The title of this post is a paraphrase of Marshall McLuhan's famous 'The medium is the message', meant to imply that the medium that carries the message also embeds itself into the message, creating a symbiotic relationship with it. Of course, as I write this, I half-expect a ghost of Mr. McLuhan to appear... Continue Reading →

Pushy Node.js

Last week I hoped to blog-shame Guillermo Rauch into releasing Socket.io v1.0 for my own convenience. Alas, it didn't work (gotta work on my SEO), but I see a lot of traffic from @rauchg on the corresponding GitHub project, so my spirits are high. Meanwhile, I realized that for my own dabbling, v0.91 is pretty... Continue Reading →

Socket.io and the Business of Open Source

In one of my previous posts on the topic of risk, I mentioned studies showing that investor tolerance for stock market risk is much higher when the market is rising than when it is falling like a knife. It turns out that risk feels like something abstract until you actually start losing... Continue Reading →

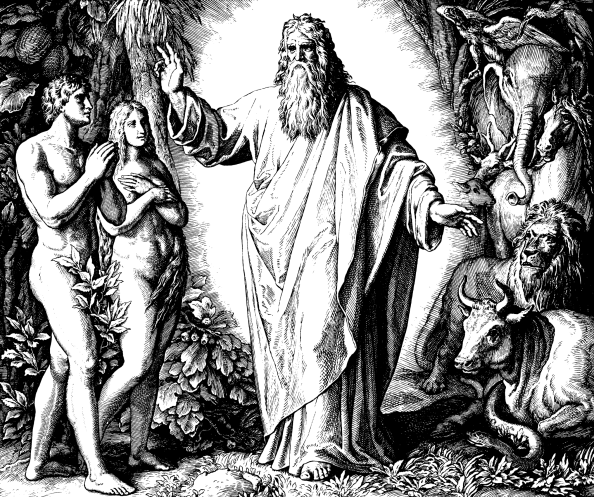

In The Beginning, Node Created os.cpus().length Workers

As reported in the previous two posts, we are boldly going where many have already gone before - into the brave new world of Node.js development. Node has this wonderful aura that makes you feel unique even though the fact that you can find answers to your Node.js questions in the first two pages of... Continue Reading →