Even people in love with Single Page Apps and pooh-poohing server-side rendering know that SPAs cannot just materialize in the browser. Something has to deliver them there. Until recently, AngularJS was the most popular client-side JS framework, and I have seen all kinds of ADVs (Angular Delivery Vehicles) - from Node.js (N is...

Pessimism as a Service

Taking human nature into account, rolling with the imperfections of reality, and expecting and preparing for the worst pays off tenfold once projects get serious: that is Pessimism as a Service in a nutshell.

With React, I Don’t Need to Be a Ninja Rock Star Unicorn

We are not delivering code, but user experiences. Code is just a means to an end, and React allows us to focus less on the code and more on what it is supposed to do.

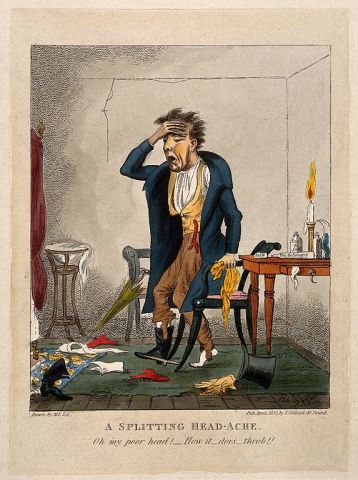

ReactJS: The Day After

The other day I stumbled upon a funny fake news report in The Onion about a local man whose one-beer plan went terribly awry. Given how I professed undying love for ReactJS in the previous article, and extrapolating from life that every night on the town is followed by a morning of reckoning, it is time to revisit...

PayPal, You Got Me At ‘Isomorphic ReactJS’

Following a fruitful series of open-source contributions that includes Kraken.js, PayPal has done it again with react-engine, which brings true isomorphism to modern Web apps.

The Art of Who Does What

It is better to be the one creating the new work than fighting over it.

Same Company, New Job

I am moving on to Cloud Data Analytics. If you are in Toronto and like areas such as Node.js micro-services, front-end Web UIs, and REST APIs, come and join me.

Oy With the Gamification Already!

There are many worthy ideas in the Spotify engineering model. Some of them are refinements of the matrixed models of the past. Most can be used without all the gaming jargon.

Don’t Take Micro-Services Off-Road

Attempting nano-services, deploying micro-services manually, adopting them because they are trendy rather than out of real need, or re-using them across multiple systems will result in a disappointment we don’t really need at the moment.

Vive la Révolution App

Whether you are a guy at a reclaimed wood desk overlooking San Francisco's Mission district, or a girl in Africa at a reclaimed computer in a school built by a humanitarian mission, we are approaching a time when we will be limited only by our creativity and by our ability to dream and build great apps.