Whether in life or Cloud system design, you should always act to decrease, not increase, maintenance cost. Keeping the number of moving parts down helps with that goal.

Microservices On-Premises: An Epic Mismatch

Microservices represent a great solution for a Cloud problem. They are not a solution for EVERY problem. Do not try to use them as a selling point for on-premises software.

Providing for Startups: the New California Gold Rush

California clipper, Wikimedia Commons

The California Gold Rush (1848–1855) lasted only 7 years. In that amount of time, it brought 300,000 people to California, invigorated the US economy, played a key role in ushering California to statehood in 1850, and caused a precipitous population decline of Native Californians. One of the most profound lessons of... Continue Reading →

Micro-Services – Fad, FUD, Fo, Fum

But band leader Johnny Rotten didn't sell out in the way that you're thinking. He didn't start as a rebellious kid and tone it down so he could cash in. No, Rotten did the exact opposite -- his punk rebelliousness was selling out. [...] And he definitely wasn't interested in defining an entire movement. From... Continue Reading →

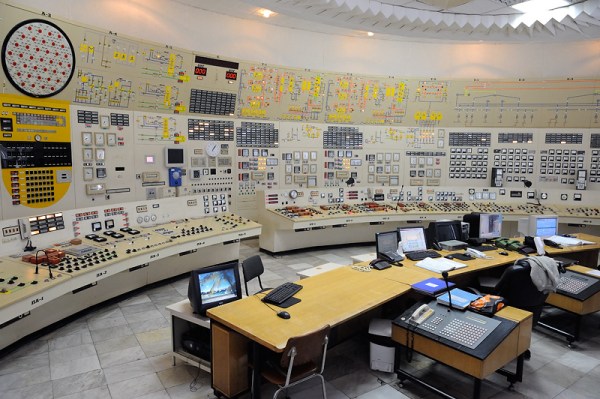

In The Beginning, Node Created os.cpus().length Workers

As reported in the previous two posts, we are boldly going where many have already gone before - into the brave new world of Node.js development. Node has this wonderful aura that makes you feel unique even though the fact that you can find answers to your Node.js questions in the first two pages of... Continue Reading →

The Gryphon Dilemma

In my introductory post, The Turtleneck and the Hoodie, I kind of lied a bit when I said that I stopped doing everything I did in my youth. In fact, I am playing music, recording and producing more than I have in a while. I realized I can do things in the comfort of my home that I... Continue Reading →

Making Gourmet Pizza in the Cloud

A while ago I had lunch with my wife in a Toronto midtown restaurant called "Grazie". As I was perusing the menu, a small rectangle at the bottom attracted my attention. It read: We strongly discourage substitutions. The various ingredients have been selected to complement each other. Substitutions will undermine the desired effect of the... Continue Reading →