Welcome to our regular edition of ‘Socket.io version 1.0 watch’ or ‘Making sure Guillermo Rauch is busy working on Socket.io 1.0 instead of whatever he does to pay the rent that does nothing for me’. I am happy to inform you that Socket.io 1.0 is now available, with the new logo and everything. Nice job!

With that piece of good news, back to our regular programming. First, a flashback. When I was working on my doctoral studies in London, England, one of the most memorable bits of trivia was a dramatic voice on the London Underground PA system warning me to ‘Mind the Gap’. Since then I seldom purchase my clothes in The Gap, choosing its more upmarket sibling Banana Republic. J.Crew is fine too. A few years ago a friend went to London to study, and she emailed me that passengers are still being reminded about the dangers of The Gap.

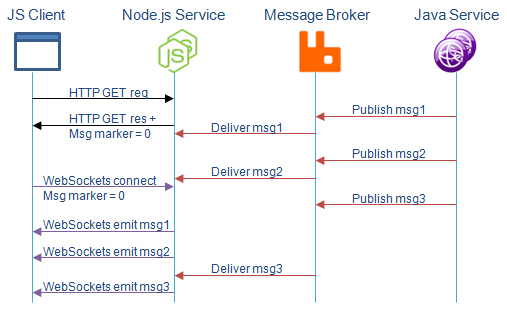

We have recently experienced a curious problem in our usage of WebSockets – our own gap to mind, as it were. It involves a topology I have already written about. You will most likely hit it too, so here goes:

- A back-end system uses a message queue to pass messages about state changes that affect the UI

- A micro-service serves a Web page containing a Socket.io client that turns around and establishes a connection with the server once the page has loaded

- In the time gap between the page being served and the client calling back to establish a WebSockets connection, new messages arrive that are related to the content on the page.

- By the time the WebSockets connection has been established, any number of messages will have been missed – a message gap of sorts.

The gap may or may not be a problem for you depending on how you are using the message broker to pass messages around micro-services. As I have already written in the post about REST/MQTT mirroring, we are using MQTT to augment the REST API. This augmentation mirrors the CRUD verbs that result in state change (CUD). The devil is in the details here, and the approach taken will decide whether the ‘message gap’ is going to affect you or not.

When deciding what to publish to the subscribers using MQ, we can take two approaches:

- Assume subscribers have made a REST call to establish the baseline state, and only send deltas. The subscribers will work well as long as they took the baseline and didn’t miss any of the deltas for whatever reason. This is similar to showing a movie on a cable channel in a particular time slot – if you miss it, you miss it.

- Don’t assume subscribers have the baseline state. Instead, assume they may have been down or not connected. Send a complete state of the resource alongside the message envelope. This approach is similar to breaking news being repeated many times during the day on a news channel. If you are just joining, you will be up to date soon.
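To make the difference between the two approaches concrete, here is a minimal TypeScript sketch of the two message shapes. The `Build` resource, the `delta` and `state` field names, and both helper functions are hypothetical, chosen only for illustration. The point it shows is that a delta is useless to a subscriber without a baseline, while a full-state message can bootstrap a subscriber that has nothing yet.

```typescript
// Hypothetical resource and envelope shapes; names are illustrative only.
interface Build {
  id: string;
  name: string;
  progress: number;
}

// Approach 1: baseline + deltas. Only the changed properties travel.
interface DeltaMessage {
  id: string;        // unique message ID from the envelope
  resource: string;  // e.g. "/builds/42"
  delta: Partial<Build>;
}

// Approach 2: full state. The complete resource travels with every message.
interface StateMessage {
  id: string;
  resource: string;
  state: Build;
}

// A delta can only be applied on top of a baseline fetched earlier via REST.
function applyDelta(local: Build | undefined, msg: DeltaMessage): Build | undefined {
  if (local === undefined) {
    // No baseline: the subscriber cannot make sense of this message.
    return undefined;
  }
  return { ...local, ...msg.delta };
}

// A full-state message needs no baseline; a late joiner is up to date at once.
function applyState(msg: StateMessage): Build {
  return { ...msg.state };
}
```

The cost of the second shape is visible in the types: `StateMessage` always carries every property of `Build`, however small the change.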

The advantage of the first approach is smaller message payloads. There is no telling how big JSON resources can get (a problem recently addressed by Tim Bray in his fat JSON blog post). Imagine we are tracking a build resource that sends us updates on its progress (20%, 50%, 70%). Do we really want to receive the entire Build resource JSON with each of these messages?

On the other hand, the second approach is not inconsistent with the recommendation for PUT and PATCH REST responses. We know that the newly created resource is returned in the response body for POST requests (alongside the Location header). It is also considered good practice to do the same in responses to PUT and PATCH requests. If somebody moves the progress bar of a build by using PATCH to update the ‘progress’ property, the entire build resource will thus be returned in the response body. The service fielding this request can simply take that JSON string and attach it to the message under the ‘state’ property, as we are already doing for POST requests.
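The PATCH flow described above can be sketched as a single handler that merges the update, returns the full resource, and publishes the very same JSON string under a `state` property. This is a framework-free sketch under stated assumptions: `handlePatch`, the in-memory `Map` store, the `MqMessage` shape and the `publish` callback are all hypothetical stand-ins for a real HTTP framework and MQTT client.

```typescript
// Hypothetical types; a real service would use its own resource model.
interface Build {
  id: string;
  name: string;
  progress: number;
}

interface MqMessage {
  id: string;                                // unique message ID (envelope)
  topic: string;
  verb: "POST" | "PUT" | "PATCH" | "DELETE";
  state?: string;                            // full resource JSON, same as the REST response body
}

let nextMessageId = 1;

// Framework-free PATCH handler: merge, respond with the full resource,
// and mirror the change to the message broker via `publish`.
function handlePatch(
  store: Map<string, Build>,
  buildId: string,
  patch: Partial<Build>,
  publish: (msg: MqMessage) => void,
): { status: number; body?: string } {
  const current = store.get(buildId);
  if (current === undefined) {
    return { status: 404 };
  }
  // Apply the partial update, but never let the client change the ID.
  const updated: Build = { ...current, ...patch, id: current.id };
  store.set(buildId, updated);

  // Serialize once: the same JSON string is the response body and the message state.
  const body = JSON.stringify(updated);
  publish({ id: String(nextMessageId++), topic: `builds/${buildId}`, verb: "PATCH", state: body });
  return { status: 200, body };
}
```

Serializing once keeps the REST response and the mirrored message byte-for-byte identical, which is the essence of the mirroring idea.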

Right now we haven’t made up our minds. Sending entire resources around in each message strikes us as wasteful. Each message will be copied into the queue of every subscriber listening to it, and if it is durable, it will also be persisted. That’s a lot of bytes to move around while using a protocol whose main selling point is that it is light on resources. Imagine pushing these messages to a native mobile client over the air. Casually attaching entire JSON resources to messages is not something you want to do in these situations.

In the end, we solved the problem without changing our ‘baseline + deltas’ approach. We tapped into the fact that messages have unique identifiers attached to them as part of the envelope. Each service that is handling clients via WebSockets keeps a little buffer of messages published by the message broker. When we send the page to the client, we also embed the ID of the last known message in the HTML as data. When the WebSockets connection is established, the client will communicate (emit) this message ID to the server, and the server will check the buffer to see whether new messages have arrived since then. If so, it will send those messages immediately, allowing the client to catch up – to ‘bridge the gap’. After the client has caught up, message traffic resumes as usual.
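Here is a minimal sketch of such a per-service buffer, assuming every broker message carries a unique `id` in its envelope and that messages reach the service in publication order. `MessageBuffer`, `push`, `lastId` and `since` are hypothetical names, not part of Socket.io or any MQTT library. Because the buffer is bounded, `since` returns `null` when the client’s last known ID has already been evicted and the gap can no longer be bridged.

```typescript
interface BrokerMessage {
  id: string;       // unique ID from the message envelope
  topic: string;
  payload: unknown;
}

class MessageBuffer {
  private readonly items: BrokerMessage[] = [];
  private readonly capacity: number;
  private dropped = false; // becomes true once any message has been evicted

  constructor(capacity: number) {
    this.capacity = capacity;
  }

  // Record every message the service receives from the broker.
  push(msg: BrokerMessage): void {
    this.items.push(msg);
    if (this.items.length > this.capacity) {
      this.items.shift(); // the oldest message drops off
      this.dropped = true;
    }
  }

  // ID of the newest buffered message; embed it in the page as data.
  lastId(): string | undefined {
    const last = this.items[this.items.length - 1];
    return last ? last.id : undefined;
  }

  // Messages newer than `afterId`, or null if the gap can no longer be bridged.
  since(afterId: string | undefined): BrokerMessage[] | null {
    if (afterId === undefined) {
      // The page was served before any message arrived: everything is new,
      // unless some of it has already been evicted.
      return this.dropped ? null : this.items.slice();
    }
    const index = this.items.findIndex((m) => m.id === afterId);
    return index === -1 ? null : this.items.slice(index + 1);
  }
}
```

In a Socket.io server, the service would call `lastId()` when rendering the page and, inside the handler for whatever event the client emits after connecting (the event name is up to you), emit each message returned by `since(id)` before normal traffic resumes. `Array.prototype.shift` is linear in the buffer size, which is acceptable for a little buffer; a ring buffer would avoid that cost.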

As a bonus, this approach also works when the client drops the WebSockets connection. When the connection is re-established, the client can use the same approach to catch up on the messages it has missed.
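On the client, the same mechanism covers both the initial page load and reconnects, provided the client keeps track of the last message ID it has applied. The sketch below leaves the transport out; `CatchUpClient`, `catchUpFrom` and `onMessage` are hypothetical names. It also ignores duplicates, on the assumption that a message published while the catch-up batch is in flight could arrive twice.

```typescript
interface BrokerMessage {
  id: string;
  topic: string;
  payload: unknown;
}

class CatchUpClient {
  private lastId: string | undefined;
  private readonly seen = new Set<string>();
  private readonly apply: (msg: BrokerMessage) => void;

  // `initialId` is the ID embedded in the page's HTML when it was served.
  constructor(initialId: string | undefined, apply: (msg: BrokerMessage) => void) {
    this.lastId = initialId;
    this.apply = apply;
  }

  // The ID to emit to the server every time the connection is (re)established.
  catchUpFrom(): string | undefined {
    return this.lastId;
  }

  // Handles replayed and live messages alike, applying each ID only once.
  onMessage(msg: BrokerMessage): void {
    if (this.seen.has(msg.id)) {
      return;
    }
    this.seen.add(msg.id);
    this.lastId = msg.id;
    this.apply(msg);
  }
}
```

With Socket.io, the client would typically emit `catchUpFrom()` from its connect handler, which runs again after a reconnection. The `seen` set grows without bound here; a long-lived page would want to cap it.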

As you can see, we are still learning and evolving our REST/MQTT mirroring technique, and we will most likely encounter more face-palm moments like this. The solution is not perfect – in an extreme edge case, the WebSockets connection can take so long to establish that the service’s message buffer fills up and old messages start dropping off. The remedy in those cases is to refresh the browser.
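How likely that edge case is depends on how long a window the buffer covers. As a back-of-the-envelope sizing aid, the window is simply the buffer capacity divided by the peak message rate. The helper names and the example numbers below are made up for illustration, not measurements from our system.

```typescript
// Seconds of traffic a buffer of `capacity` messages can hold at a given rate.
function coverageSeconds(capacity: number, messagesPerSecond: number): number {
  if (messagesPerSecond <= 0) {
    return Infinity; // no traffic means nothing is ever evicted
  }
  return capacity / messagesPerSecond;
}

// Capacity needed to survive a connection delay of `windowSeconds`.
function capacityFor(windowSeconds: number, messagesPerSecond: number): number {
  return Math.ceil(windowSeconds * messagesPerSecond);
}
```

For example, a 1000-message buffer at a peak of 20 messages per second covers `coverageSeconds(1000, 20)`, i.e. 50 seconds, while surviving a 120-second delay at the same rate would take `capacityFor(120, 20)`, i.e. 2400 messages.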

We are also still intrigued by sending the state in all messages. There is something reassuring about it, and its similarity to PATCH/PUT behavior nicely reinforces the mirroring aspect. Perhaps our resources are not that large, and we are needlessly fretting over message sizes. On the other hand, when making a REST call, callers can use ‘fields’ and ‘embed’ to control the size of the response. Since we don’t know what any potential subscriber will need, we would have no choice but to send the entire resource. We need to study that approach more.
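To illustrate the asymmetry, here is a sketch of what a `fields`-style projection lets a REST caller do. The semantics assumed here (a comma-separated list of top-level property names) are hypothetical, and real APIs vary. A broadcast message has no single caller whose choice could be applied, which is why it would have to carry the whole resource.

```typescript
// Keep only the requested top-level properties; no `fields` means everything.
function selectFields(resource: Record<string, unknown>, fields?: string): Record<string, unknown> {
  if (!fields) {
    return { ...resource };
  }
  const wanted = new Set(
    fields.split(",").map((f) => f.trim()).filter((f) => f.length > 0),
  );
  const result: Record<string, unknown> = {};
  for (const key of Object.keys(resource)) {
    if (wanted.has(key)) {
      result[key] = resource[key];
    }
  }
  return result;
}
```

A caller asking for `fields=id,progress` gets a two-property object back, whereas a message subscriber would receive whatever the publisher chose to send.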

That’s it from me this week. Live long, prosper and mind the gap.

© Dejan Glozic, 2014

You should check out Ponte; it seems to have solutions to some of these problems. It’s a REST/MQTT broker with several different backends. It’s also embeddable. It has a retain state on messages to determine which go to the browser.

https://github.com/eclipse/ponte

Another thing that could be useful is operational transforms, à la Google Wave. There is a fairly decent implementation in ShareJS, and it has the added benefit of running over BrowserChannel.

http://sharejs.org

BrowserChannel is a communication protocol that Google developed to serve real-time updates in Gmail. It has higher latency, but it is still good enough for many use cases, and it actually seems to offer some of the guarantees you are looking for.

What’s nice about it is that it doesn’t require all the WebSocket jiggery-pokery at the web server level, and it can run over plain HTTP.

https://github.com/josephg/node-browserchannel

I have seen Ponte already, Matteo made sure I know about it :-).

Thanks for the BrowserChannel pointer, will definitely check it out.