Despite many desirable properties, micro-services carry two serious penalties to be contended with: authentication (which we covered in the previous post) and Web page composition, which I intend to address now.

Imagine you are writing a Node.js app and use Dust.js for the V of the MVC, as we are doing. Imagine also that several pages have shared content you want to inject. It is really easy to do using partials, and practically every templating library has a variation of that (and not just for Node.js).

However, if you build a micro-service system and your logical site is spread out between several micro-services, you have a complicated problem on your hands. Now partial inclusion needs to happen across the network, and another service needs to serve the shared content. Welcome to the wonderful world of distributed composition.

This topic came into sharp focus during Nodeconf.eu 2014. Clifton Cunningham presented his team's work in this particular area, including Compoxure, the project they have open-sourced and shared with us. Clifton has written about it on his blog, and it is a very interesting read.

Why bother?

At this point I would like to step back and look at the general problem of the document component model. For all their sophistication and fantastic feature sets, browsers are stubbornly single-document oriented. They fight with us all the time when it comes to where the actual content on the page comes from. It is trivially easy to link to a number of stylesheets and JavaScript files in the HEAD section of the document, but you cannot point at a page fragment and later use it in your document (until Web Components become a reality, that is – including page fragments that contain custom element templates and associated styles and scripts is the whole point of this standard).

Large monolithic server-side applications were mostly spared from this problem because it was fairly easy to include shared partials within the same application. More recently, single page apps (SPAs) have dealt with this problem using client-side composition: if everything is a widget/plug-in/add-on, your shared area can be included into your page from the client in the same way. Some people are fine with this, but I see two flaws in this approach:

- Since there is no framework-agnostic client-side component model, you end up stuck with the framework you picked (e.g. Angular.js headers, footers or navigation areas cannot be consumed in Backbone micro-services).

- In SPAs, the pause while JavaScript is downloaded and parsed before the page is assembled can range from a short blip to a seriously annoying blank-page stare. I understand that very dynamic content may need some time to be assembled, but shared areas such as headers, footers and sidebars should arrive quickly, and so should the initial content (yeah, I don’t like large SPAs, why do you ask?).

The approach we have taken can be called ‘isomorphic’ – we like to render initially on the server for SEO and fast first content, and later progressively enhance the page using JavaScript, loading modules dynamically ‘on the fly’ with Require.js. If you use Node.js and a JavaScript templating engine such as Dust.js, the same partials can be reused on the client (something Airbnb has demonstrated as a viable option). The catch is that we need to render a complete initial page on the server, and we would like shared areas such as headers, sidebars and footers to arrive as part of that first page. With a micro-service system, that means we need a solution for a distributed document model on the server.

Alternatives

Clifton and I talked about options at length, and he has a nice breakdown of alternatives on the Compoxure GitHub home page. For your convenience, I will briefly call out some of them:

- Ajax – this is a client-side MVC approach. I already mentioned why I don’t like it – it is bad for SEO, and you need to stare at the blank page while JavaScript is being downloaded and/or parsed. We prefer to use JavaScript after the initial hit.

- iFrames – you can fake a document component model by using seamless iframes. They are bad for SEO again, there is no opportunity for caching (hence performance problems due to latency), content in iframes is clipped at the edges, and cross-frame communication is awkward (although window.postMessage offers a workaround). They do, however, get around the single-domain restriction browsers impose on Ajax. Nevertheless, they have all the cool factor of re-implementing framesets from the 90s.

- Server Side Includes (SSIs) – you can inject content this way if you use a proxy such as Nginx. It can work and even provide some level of caching, but not the fine-grained programmatic control that is desirable when different shared areas need different TTL (time to live) values.

- Edge Side Includes (ESIs) – a more complete implementation that unfortunately locks you into Varnish or Akamai.

Obviously for Clifton’s team (and ourselves), none of these approaches quite delivers, which is why services like Compoxure exist in the first place.

Direct composition approach

Before I had an opportunity to play with Compoxure, we spent a lot of time wrestling with this problem in our own project. Our current thinking is illustrated in the following diagram:

The key aspects of this approach are:

- Common areas are served by individual composition services.

- Common area service(s) are proxied by Nginx so that they can later be called directly via Ajax. This allows the same partials to be reused after the initial page has rendered (hence ‘isomorphic’).

- Common area services can also serve CSS and JavaScript. Unlike the hoops we need to jump through to stitch HTML together, CSS and JavaScript can simply be linked in the HEAD of the micro-service page. Nginx helps make the URLs nice, for example ‘/common/header/style.css’ and ‘/common/header/header.js’.

- Each micro-service is responsible for making a server-side call, fetching the common area response and passing it into the view for inlining.

- Each micro-service takes advantage of a shared Redis instance to cache the responses from each common service. Responses from common services that require authentication and deliver personalized content are cached in Redis on a per-user basis.

- Common areas are responsible for publishing messages to the message broker when something changes. Any dynamic content injected into the response is monitored and, if it changes, a message is fired to ensure cached values are invalidated. At a minimum, common areas should publish a general ‘drop cache’ message on restart (to ensure new service deployments that contain changes are picked up right away).

- Micro-services listen to invalidation messages and drop the cached values when they arrive.
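To make the caching and invalidation steps above more concrete, here is a minimal sketch of what a micro-service might do, assuming Node.js. To keep it self-contained, a plain Map stands in for the shared Redis instance and a Node.js EventEmitter stands in for the message broker; the `CommonAreaCache` class and the `invalidate` / `drop-cache` topic names are hypothetical, not part of any library.

```javascript
const { EventEmitter } = require('events');

// Stand-in for the message broker (assumption: a real system would use a pub/sub topic).
const broker = new EventEmitter();

class CommonAreaCache {
  constructor(broker) {
    // Stand-in for the shared Redis instance: cache key -> HTML fragment.
    this.entries = new Map();
    // A common area announced a change to one fragment: drop just that key.
    broker.on('invalidate', (key) => this.entries.delete(key));
    // A common area restarted (e.g. new deployment): drop everything.
    broker.on('drop-cache', () => this.entries.clear());
  }

  // Cache-aside lookup: return the cached fragment, or fetch and remember it.
  // Personalized areas would use a per-user key such as 'header:user:42'.
  async get(key, fetchFragment) {
    if (this.entries.has(key)) return this.entries.get(key);
    const html = await fetchFragment();
    this.entries.set(key, html);
    return html;
  }
}
```

A controller would then call something like `await cache.get('header', fetchHeader)` and pass the result into the view for inlining. The sketch does not deduplicate concurrent misses for the same key, which a production version probably should.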

This approach has several things going for it. It uses caching, allowing micro-services to have something to render even when common area services are down. There are no intermediaries – the service is directly responding to the page request, so the performance should be good.

The downside is that each service is responsible for making the network calls and doing so in a resilient manner (circuit breaker, exponential back-off and such). If all services use Node.js, a module that encapsulates Redis communication, the circuit breaker etc. would help abstract away this complexity (and reduce bugs). However, if some micro-services are in Java or Go, we would have to duplicate this using language-specific approaches. It is not exactly rocket science, but it is not DRY either.
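As an illustration of what such a shared Node.js module might contain, here is a minimal sketch of a circuit breaker with exponential back-off. The `CircuitBreaker` class, its option names and its default thresholds are assumptions made for this example, not the API of an existing package; the injectable `now` clock is only there to make the behaviour easy to test.

```javascript
class CircuitBreaker {
  constructor({ maxFailures = 3, baseDelayMs = 100, maxDelayMs = 10000, now = Date.now } = {}) {
    this.maxFailures = maxFailures; // consecutive failures before the circuit opens
    this.baseDelayMs = baseDelayMs; // first back-off period
    this.maxDelayMs = maxDelayMs;   // upper bound for the back-off period
    this.now = now;                 // injectable clock
    this.failures = 0;              // current run of consecutive failures
    this.openUntil = 0;             // calls are short-circuited until this time
  }

  // Back-off doubles with every failure beyond the threshold, capped at maxDelayMs.
  backoffMs() {
    const exponent = this.failures - this.maxFailures;
    return Math.min(this.baseDelayMs * 2 ** exponent, this.maxDelayMs);
  }

  async call(fn) {
    if (this.now() < this.openUntil) {
      // Fail fast instead of hammering a service that is known to be down.
      throw new Error('circuit open');
    }
    try {
      const result = await fn();
      this.failures = 0; // a success closes the circuit again
      this.openUntil = 0;
      return result;
    } catch (err) {
      this.failures += 1;
      if (this.failures >= this.maxFailures) {
        // Open the circuit; once the back-off expires, calls are let through as trials.
        this.openUntil = this.now() + this.backoffMs();
      }
      throw err;
    }
  }
}
```

When a call fails or is short-circuited, the controller would typically fall back to the last cached copy of the common area rather than render an empty slot.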

The Compoxure approach

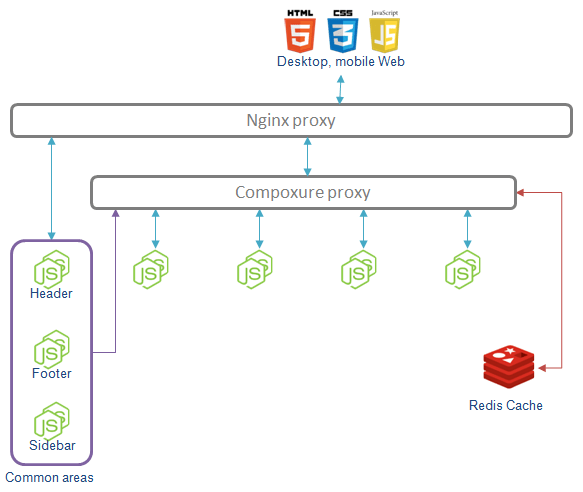

Clifton and his team have taken a route that mimics ESI/SSI while addressing their shortcomings. They have their own diagrams, but I put together another one to better illustrate the difference from the direct composition diagram above:

In this approach, composition is actually performed in the Compoxure proxy, which is inserted between Nginx and the micro-services. Instead of making its own network calls, each micro-service adds special attributes to the DIV where the common area fragment should be injected. These attributes control parameters such as what to include, which cache TTL to employ, which cache key to use etc. There is a lot of detail in the way these attributes are set (RTFM), but suffice it to say that the Compoxure proxy serves as an HTML filter that injects the content from the common areas into these DIVs as instructed. For example:

<div cx-url='{{server:local}}/application/widget/{{cookie:userId}}'
     cx-cache-ttl='10s' cx-cache-key='widget:user:{{cookie:userId}}'
     cx-timeout='1s' cx-statsd-key="widget_user">
  This content will be replaced on the way through
</div>
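To make the ‘HTML filter’ idea concrete, here is a deliberately simplified sketch of what a composition proxy does with markup like the above: find each DIV carrying a `cx-url` attribute, expand the `{{namespace:name}}` placeholders, fetch the fragment and splice it in place of the placeholder content. This is not Compoxure's code. The `composePage` and `expandTemplate` functions, the `vars` object and the `fetchFragment` callback are hypothetical, the regular expression does not handle nested DIVs, and the cache and timeout attributes are ignored; a real proxy would use a proper HTML parser.

```javascript
// Matches a DIV whose opening tag has a cx-url attribute, up to the first </div>.
// Group 1: the opening tag; group 3: the URL template. Nested DIVs are NOT supported.
const CX_DIV = /(<div\b[^>]*\bcx-url=(['"])(.*?)\2[^>]*>)[\s\S]*?<\/div>/g;

// Expand {{namespace:name}} placeholders such as {{cookie:userId}} from a
// hypothetical vars object, e.g. { cookie: { userId: '42' } }. Unknown ones become ''.
function expandTemplate(template, vars) {
  return template.replace(/\{\{(\w+):(\w+)\}\}/g, (_, ns, name) =>
    vars[ns] && vars[ns][name] !== undefined ? vars[ns][name] : '');
}

// Replace the content of every cx-url DIV with its fetched fragment.
// If a fetch fails, that DIV keeps its original placeholder content.
async function composePage(html, vars, fetchFragment) {
  const matches = [...html.matchAll(CX_DIV)];
  // Fetch all fragments in parallel; a failure yields null.
  const fragments = await Promise.all(matches.map((m) =>
    Promise.resolve()
      .then(() => fetchFragment(expandTemplate(m[3], vars)))
      .catch(() => null)));
  let out = '';
  let last = 0;
  matches.forEach((m, i) => {
    out += html.slice(last, m.index);
    out += fragments[i] === null ? m[0] : m[1] + fragments[i] + '</div>';
    last = m.index + m[0].length;
  });
  return out + html.slice(last);
}
```

Keeping the placeholder on failure mirrors the idea that the micro-service's own fallback content is better than an empty slot.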

This approach has many advantages:

- The whole business of calling the common area service(s), caching the response according to TTLs, dealing with network failure etc. is handled by the proxy, not by micro-services.

- Content injection is stack-agnostic – it does not matter how the micro-service that serves the HTML is written (in Node.js, Java, Go etc.) as long as the response contains the expected attributes.

- Even in a system written entirely in Node.js, writing micro-services is easier – there is no special code to add to each controller.

- Compoxure is used only to render the initial page. After that, Ajax takes over and the composition services are called directly.

Contrasting the approach with direct composition, we identified the following areas of concern:

- Compoxure parses HTML in order to locate DIVs with the special attributes. This adds a performance hit, although in practice it appears to be fairly small.

- The special attributes are not valid HTML5 (a ‘data-’ prefix would be). If this bothers you, you can configure Compoxure to replace the DIV carrying these attributes entirely with the injected content, so this is likely a non-issue.

- Obviously Compoxure inserts itself in front of the micro-services and must not go down. It goes without saying that you need to run multiple instances and practice ZDD (Zero-Downtime Deployment).

- Caching is static, i.e. content is cached based on TTLs. This makes picking the TTL values tricky – our pub/sub approach allows us to use higher TTL values because we will be told when to drop the cached value.

- During development, the direct composition approach requires that you have your own micro-service running, as well as the common area services. Compoxure adds another process to start and configure locally before you can see your page with all the common areas rendered. If you hit your micro-service directly, all the DIVs with the ‘cx-’ attributes will be empty (or contain the placeholder content).

Discussion

Direct composition and Compoxure proxy are two valid approaches to the server-side document component model problem. They both work well, with different tradeoffs. Compoxure is more comfortable for developers – they just configure a special placeholder div and magic happens on the way to the browser. Direct composition relies on fewer moving parts, but makes each controller repeat the same code (unless that code is encapsulated in a shared Node.js module).

An approach that bridges both worlds, and something we are seriously thinking of doing, is to write a Dust.js helper that further simplifies inclusion of the common areas. Instead of importing a module, you would register a helper and then just use it in your markup:

<div>
  {@import url="{headerUrl}" cache-ttl="10s"
           cache-key="widget:user:{userid}" timeout="1s"/}
</div>
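A rough sketch of such a helper follows. It assumes dustjs-linkedin 2.7 or later, where `context.resolve` turns a parameter such as `"{headerUrl}"` into its string value, and it uses the asynchronous `chunk.map` pattern Dust provides for helpers that do I/O. The `createImportHelper` factory and the `loadFragment(url, options)` callback are hypothetical; `loadFragment` is where the caching and circuit breaker logic of direct composition would live.

```javascript
// Hypothetical factory for the {@import .../} helper shown above.
// Registration (assumed): dust.helpers.import = createImportHelper(loadFragment);
function createImportHelper(loadFragment) {
  return function importHelper(chunk, context, bodies, params) {
    // Parameters may contain Dust references such as "{headerUrl}"; resolve them to strings.
    const url = context.resolve(params.url);
    const options = {
      key: context.resolve(params['cache-key']),
      ttl: context.resolve(params['cache-ttl']),
      timeout: context.resolve(params.timeout),
    };
    // chunk.map lets Dust keep rendering the rest of the template while the fragment loads.
    return chunk.map((asyncChunk) => {
      Promise.resolve()
        .then(() => loadFragment(url, options))
        .catch(() => '') // on failure, render nothing rather than breaking the page
        .then((html) => asyncChunk.end(html));
    });
  };
}
```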

Of course, Compoxure has some great properties that are not easy to replicate with this approach. For example, it does not pass TTL values to Redis directly, because that would cause the cached content to disappear after the countdown; Compoxure prefers to keep the last content past its TTL in case the service is down (better to serve slightly stale content than no content at all). This is a great feature and would need to be replicated here. I am sure I am missing other great features, and Clifton will probably remind me about them.
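The ‘serve stale rather than nothing’ behaviour can be sketched independently of Redis: keep each entry past its TTL, treat an expired entry only as a cue to refetch, and fall back to the expired copy if the refetch fails. The `StaleOnErrorCache` class below is a hypothetical in-memory illustration of that policy, not Compoxure's implementation.

```javascript
class StaleOnErrorCache {
  constructor({ now = Date.now } = {}) {
    this.entries = new Map(); // key -> { value, expiresAt }; expired entries are kept
    this.now = now;           // injectable clock
  }

  async get(key, ttlMs, fetchFresh) {
    const entry = this.entries.get(key);
    if (entry && this.now() < entry.expiresAt) return entry.value; // fresh hit
    try {
      const value = await fetchFresh();
      this.entries.set(key, { value, expiresAt: this.now() + ttlMs });
      return value;
    } catch (err) {
      if (entry) return entry.value; // service down: slightly stale beats nothing
      throw err;                     // nothing cached yet, so surface the failure
    }
  }
}
```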

Conclusion

In the end, I like both approaches for different reasons, and I can see a team using both successfully. In fact, I could see a solution where both are available – a Dust.js helper for Node.js/Dust.js micro-services, and Compoxure for everybody else (as a fallback for services that cannot or do not want to fetch common areas programmatically). Either way, the result is superior to the alternatives – I strongly encourage you to try it in your next micro-service project.

You don’t even have to give up your beloved client-side MVCs – we have examples where direct composition is used in a page with Angular.js apps and another with a Backbone app. These days, we are spoiled for choice.

© Dejan Glozic, 2014

Dejan, great post – you captured a lot of the thinking behind Compoxure very clearly. Two small points, though, around caching.

The first is that the services can respond with any of the normal cache headers (no-cache, max-age) and Compoxure will honour them. If any fragment responds with no-cache, Compoxure will also send that back in the overall page response, e.g. to avoid a CDN caching a page that has something user-specific in it. This is a recent change and the docs haven’t caught up (a better site that describes all of the options and how to get it up and running on a new project is on its way – we’re just in the middle of getting the first big project that uses it over the line).
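The header propagation rule described above can be illustrated with a tiny function: if any fragment's Cache-Control contains `no-cache`, the composed page is marked `no-cache` too; otherwise the page keeps its own header. This is a hypothetical simplification (function names are made up, and it only recognizes the plain `no-cache` directive), not Compoxure's actual code.

```javascript
// True if a Cache-Control header value contains a plain no-cache directive.
function hasNoCache(headerValue) {
  return String(headerValue || '')
    .split(',')
    .some((directive) => directive.trim().toLowerCase() === 'no-cache');
}

// If any fragment opted out of caching, the whole composed page opts out,
// so a CDN never stores a page containing user-specific content.
function composedCacheControl(pageHeader, fragmentHeaders) {
  return fragmentHeaders.some((h) => hasNoCache(h)) ? 'no-cache' : pageHeader;
}
```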

The second is that the cache key in Redis is configurable in the fragment definition so that pre-warming the cache (as you know all the keys) is definitely doable – you can have a service that is never actually called by Compoxure but just regularly places its content into the cache. This is an especially good option if the fragment is expensive to produce.

Look forward to hearing how you and your team get on.

Clifton, it was a given that I would miss a fine point or two :-). I actually think that cache-key is a great feature because it can be dynamically computed, and also because you can hit Redis with it directly. Apart from the warm-up, a Dust.js helper could also hit the same Redis with the same key, so that a service using direct composition can coexist with Compoxure and use the same Redis entries.

As for TTLs, my point was more theoretical than practical. If you compute the common area content dynamically, in theory the cache may be out of sync with the service for up to the TTL. If the service fires an event when the dynamic content changes, the cache can be updated before the TTL countdown reaches 0.

Having said that, dynamically computed content rendered server-side will normally not change every second. If it changes frequently, a client-side solution that kicks in after the page has been rendered is probably a better option.

I’ve created minimal vanilla JS components where individual DIVs can call out to their own services for data. I find this somewhat nicer than the big opinionated frameworks: http://run-node.com/even-easier-client-side-rendering-in-vanilla-js/

I agree this is an option; in fact, I called it out in the article. However, it requires JavaScript to run, and the services you are calling must either be proxied or use CORS. Finally, the content you fetch on the client will not be available to search engine crawlers. That may or may not be a problem, depending on whether you want the inlined content to be searchable.

This is really interesting. The focus seems to be on replacing HTML, but how would you organize JS and CSS?

For instance, even if you don’t have a SPA, you might use Bootstrap to progressively enhance parts of your page. Some microservices may include JS/CSS specific to that microservice as well. Ideally the composition proxy would figure out which JS/CSS to include and deduplicate the JS/CSS that is shared by multiple services and would therefore be included multiple times on the composed page.

What if microservices use different versions of shared components?

I’m positive a lot of these issues can be solved by just being careful about what to include, but if the point of microservices is to be able to have different teams develop and release them independently I’m curious to hear how you would approach these things.

Roy, good questions. Our focus was on HTML because until Web Components make HTML fragments easy, it will continue to be the hardest part. You can simply add CSS link in HEAD for the shared area, and load JS required by it at the bottom of your page. We use Require.js to control JS namespaces and avoid collisions. When it comes to CSS, since we don’t know what the page will use, our current approach is to use vanilla JS and carefully written CSS to avoid clashes. This is limiting somewhat (you can’t use your favorite client side MVC for the common areas), but it also helps because there is less to load.

Of course, you can ignore neutrality and simply assume the entire system uses the same version of Bootstrap, and depend on it in the imported HTML. This sounds like a limitation, but note that common areas and the pages that include them need to coordinate visually anyway. In fact, I have written about this exact problem already ().

I don’t claim we have this problem solved, and will actually ask Clifton how Compoxure handles it (I think they have a solution that helps to a degree).

This article is so useful! Thank you for sharing! Have you had any new findings since 2014 or are these still the processes you’d use?

Hey, thanks! We are actually still using this; it has proven remarkably usable in production. Of the two approaches, we kept using the first and did nothing with Compoxure. We don’t even bother with all the Redis/cache stuff – we use an in-memory LRU cache to keep the header in memory for 5 minutes. Now that you mention it, it would probably help to store it in a shared Redis instance to avoid keeping multiple copies of the same thing around, but we are lazy (and don’t want to keep headers in Redis to avoid Redis bloat).
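For readers curious what this lightweight variant looks like, here is a minimal sketch of an in-memory LRU cache with a time-to-live, using the insertion order of a JavaScript Map to track recency. The `LruTtlCache` name and the default capacity are illustrative assumptions; the reply above does not say which LRU module is actually used.

```javascript
class LruTtlCache {
  constructor({ maxEntries = 100, ttlMs = 5 * 60 * 1000, now = Date.now } = {}) {
    this.maxEntries = maxEntries;
    this.ttlMs = ttlMs;       // 5 minutes by default, as in the reply above
    this.now = now;           // injectable clock
    this.entries = new Map(); // iteration order = recency; first key is least recently used
  }

  get(key) {
    const entry = this.entries.get(key);
    if (!entry) return undefined;
    if (this.now() >= entry.expiresAt) {
      this.entries.delete(key); // expired: behave like a miss
      return undefined;
    }
    // Re-insert so this key becomes the most recently used.
    this.entries.delete(key);
    this.entries.set(key, entry);
    return entry.value;
  }

  set(key, value) {
    this.entries.delete(key);
    this.entries.set(key, { value, expiresAt: this.now() + this.ttlMs });
    if (this.entries.size > this.maxEntries) {
      // Evict the least recently used entry (first in iteration order).
      this.entries.delete(this.entries.keys().next().value);
    }
  }
}
```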

Thanks for the response! This is great to know! I’ve been loving all your articles by the way!